It’s been over three years now since I published a lengthy dismantling of the very bizarre “No Estimates” movement. My four-part series on the movement marched methodically and thoroughly through the issues surrounding NoEstimates — e.g., what common sense tells us about estimating in life and business, reasons why estimation is useful, specific responses to the major NoEstimates arguments, and a wrap-up that in part dealt with the peculiar monoculture (including the outright verbal abuse frequently directed by NoEstimates advocates at critics) that pervades the world of NoEstimates. I felt my series was specific and comprehensive enough so that I saw no reason (and still see no reason) to write further lengthy posts countering the oft-repeated NoEstimates points; I’ve already addressed them not just thoroughly, but (it would seem) unanswerably, given that there has been essentially no substantive response to those points from NoEstimates advocates.

However, the movement shows little sign of abating, particularly via the unflagging efforts of at least two individuals who seem to be devoted to evangelizing it full-time through worldwide paid workshops, conference presentations, etc. Especially at conferences attended primarily by developers, the siren song that “estimates are waste” is ever-compelling, it seems. Even though NoEstimates advocates apparently have no answer to (and hence basically avoid discussion of) the various specific objections to their ideas that people have raised, they continue to pull in a developer audience to their many strident presentations of the NoEstimates sales pitch.

So here’s my take: the meaty parts of the topic, the core arguments related to estimates, have indeed long been settled. NoEstimates advocates have barely ventured to pose either answers or substantive (non-insult) objections to the major counterpoints that critics have raised. For the last several years, then, the sole hallmark of the NoEstimates controversy has been not the what but the how: the tone, rhetoric, and (il)logic with which NoEstimates advocates present it.

With that in mind, it’s time to deconstruct a NoEstimates conference talk in detail. There are several such talks I could have done this with (see the annotated list at the end of this post), but I decided to choose the most recent one available, despite its considerable flaws. And by “deconstruct”, I’m going to look primarily at issues of gamesmanship and sheer rhetoric — in other words, I won’t take time or space to rehash the many weaknesses of the specific NoEstimates arguments themselves. As I’ve stated, those weaknesses have been long addressed, and you can refer to their full discussion here.

I’m arguing that at this point, the key learning to be had from the otherwise fairly futile and sadly rancorous NoEstimates debate is actually no longer about the use of estimates or even about software development itself, but really more about the essence of how to argue any controversial case, in general, effectively and appropriately. It’s an area where IT/development people are often deficient, and a notable case example of that is the flawed way that some of those people argue for faddish, unsupportable ideas like NoEstimates.

The NoEstimates conference talk that I’ll deconstruct here, given at the Path To Agility conference in 2017, is characteristic: in particular, it starts out setting its own stage for a “them against us” attitude; then, it relies on:

- straw man arguments and logical leaps

- selective and skewed redefinitions of words

- misquoting of experts

- citing of dubious “data” in order to imbue the NoEstimates claims with an aura of legitimacy.

I won’t go into depth on every single example of these from the talk, but will focus in on just a few representative snippets for each of these aspects, citing specific direct quotes, with time stamps, from the YouTube video available here, and capturing one or two of the slides available here.

“Them against us” attitude

0:04 “I like to gauge the audience. There’s more of you than there are of me. I like to know where the punch might come from. Where are my developers? That’s devs; all right, there’s my friends. Who’s in a PMO or in a project management role? Oh… OK… OK.” (shows mock trepidation).

It’s really notable: the speaker divides the audience right at the start, expressing an outright “them against us” stance.

2:25 “Hashtag NoEstimates… I’m not responsible for what happens if you tweet to this hashtag. You have been warned. It’s a very good discussion; sometimes it’s not so good.”

The speaker somehow feels the need to warn the audience against posting to the Twitter hashtag of #NoEstimates. He gives no specifics, just vague but dire warnings. All of that is especially odd, given the frequent declarations of #NoEstimates advocates of the importance of collaboration and trust.

Straw man arguments and logical leaps

15:49 “Conversation around the estimate could be valuable… which means the estimate is not valuable.”

What? The fact that one can hold useful conversation about something somehow makes that thing itself not valuable? That’s quite a leap.

18:10 “Magic numbers happen in a lot of ways…. Padding the numbers by 20%, why not 40%. That’d be too high, they’d never accept it… Or Excel gymnastics… A spreadsheet that padded a number, you fill in the effort, talk about the number of people impacted, etc., through creative Excel gymnastics, you got a number that was so inflated you could never be wrong. This will take twelve months, but we’re just adding a line of code.”

As one frequently tweeted quip states, “please stop using really poor examples of ____ to illustrate why ___ doesn’t work.” What extreme depictions of estimating such as “Excel gymnastics” actually illustrate, from this speaker, is an inability to understand or recognize how estimates can actually be used appropriately and productively. The analogy I always use: countless photos of twisted metal car wrecks do not make a convincing case for #NoCars. Similarly, if you dismiss all estimates as “magic numbers”, you probably need to read up on the many useful, well-known, non-“magic” ways to estimate.

21:12 “Here’s the drop-the-mic moment. 17% of large IT projects go so badly that they threaten the existence of the company. We’re taking a guess and we’re making a huge bet, and 17% of the time we could actually close the doors of our company, because we were just so far off.”

So, note how the speaker deliberately links major project disasters specifically and solely to the bugaboo of estimating (“taking a guess”): in short, without any specific knowledge or consideration of actual root cause, the speaker is reflexively blaming the use of estimates for those extreme project failures, across the board. Again, quite the leap.

25:05 “Who’s familiar with the Farmers’ Almanac? It predicts weather. It’s created 18 months ahead of time. It’s about 50% right… You’re better off just looking up at the sky.”

In what world are careful, methodical, facts-based estimates, made by software professionals regarding level of effort of proposed work items, equivalent to the Farmers’ Almanac? That’s a straw man, a mere derisive comparison. It’s surprising the speaker didn’t also trot out the example of Punxsutawney Phil.

Selective and skewed redefinitions of words

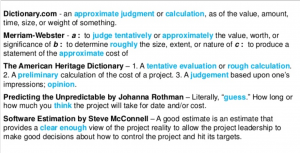

Watch the segment beginning at 9:07 carefully; it’s quite representative of the disturbing rhetorical flourishes throughout the talk. The speaker first polls the audience about what estimates are, pulling out selected comments that promote his targeted definition of estimates and scoffing at any that don’t. Then, he puts up a prepared slide (10:31) with five definitions of “estimate” taken from various sources.

Although only one of the five definitions (the one from a declared NoEstimates advocate) actually uses the word “guess”, the speaker then peremptorily declares to the audience, in front of their very eyes, that the definitions state that’s what estimates are: just guesses:

11:03 “So essentially, through all credible sources, we can distill it down to ‘guess’. Is that fair, does anyone want to push back on that? [two-second pause] All right, so we all agree that an estimate is a guess.”

The rest of his entire presentation then becomes predicated on this flimsy foundation, this rhetorical sleight of hand, that an estimate is nothing more than a guess. He repeats it in various ways, often sarcastically, for emphasis throughout the talk: e.g., “estimates are guesses; we’re guessing and we’re wrong a lot; we suck at guessing.” Let’s look at a number of specific examples:

- 12:45 “Why do we need estimates if they’re just a guess?”

- 13:13 “Make pretty charts, that’s important; so someone can validate their guess. So we’re gonna predicate a guess on a guess. I like that.” [said derisively]

- 14:12 “So in order for executives to make decisions, we’re going to hand them a guess. And they’re gonna make bets about our company. You’re laughing because it kind of sounds absurd, doesn’t it.”

- 14:30 “Typically estimates are required at the fore because we need to make decisions, and we believe that a GUESS is a great way to make a decision.”

- 19:28 “You’re taking a guess, adding another guess of a confidence interval. And I’m supposed to accept it.”

- 21:46 “We’re telling our leaders to make big bets on software with GUESSES. Not only that, 80% of the time we just FAIL. 17% of those failures could close our doors. Is this how you want to run a company? Who is actually in favor of this? Not a single hand up.”

Again, all of these statements leverage and reinforce the speaker’s predetermined skewed definition and premise, i.e., that estimating is just guessing. Once you accept that extreme premise (see Layne’s Law), there’s no room for fruitful discussion of the many ways that estimates actually help in software development scenarios.

Even in the Q&A period, when someone challenges the speaker’s definitions, he goes back to reaffirming what he has essentially browbeaten the audience into supposedly “agreeing” on:

44:05 “Let’s say I’m trying to get a million dollars out of you to do a project. Anything I hand you, we’ve agreed it is a guess. That’s a premise that didn’t get a lot of challenge.”

So we see, amidst the intentionally skewed definition, there are multiple instances of repeated straw man logic, coupled with what is frankly just blatant audience manipulation.

Misquoting of experts

19:46 “Steve McConnell — he provides what a good estimate is. What he says is, a good estimation approach should provide estimates that are within 25% of the actual results 75% of the time. How does that sound to you? Give me $100K. 75% of the time, I’ll hit your ROI within 25% plus or minus. The rest of the time I could lose it all, plus 10x, or I could win it all plus 10x. Who wants to give me $100K? No one in this room. But this is what we say is a good estimate, and this is what we say a company should use to invest.”

This is a rambling, agenda-laden mishmosh of extreme and unrealistic risk scenarios (lose it all plus 10x? Really?). Moreover, it’s intellectually dishonest. What he (mis)quotes is not even close to Steve McConnell’s point in his seminal book, Software Estimation. The speaker not only misreads the 25% / 75% description (which contains nary a mention of any “10x” factor), but he appears not to have finished reading the entire section, in which McConnell carefully spells out what he considers to be criteria for a good estimate.

In that section, McConnell mentions, as background, two common understandings of what a good estimate is, but then specifically says that the above criterion (“within 25% of the actual results 75% of the time”) is deficient: “an important concept is missing from both of these definitions–namely, that accurate estimation results cannot be accomplished through estimation practices alone. They must also be supported by effective project control.” (P. 11). After several more pages of discussion, McConnell ends his chapter with a paragraph helpfully and specifically titled “1.8 A Working Definition of A Good Estimate”, which, needless to say, is NOT what the NoEstimates speaker quoted. McConnell writes,

“With the background provided in the past few sections, we are now ready to answer the question of what qualifies as a good estimate.

A good estimate is an estimate that provides a clear enough view of the project reality to allow the project leadership to make good decisions about how to control the project to meet its targets.” (P. 14)

So, the speaker has cherry-picked a single isolated quote, misread it even, and then failed to notice that his cited source discusses that quote’s deficiencies and proposes something else as characterizing a good estimate.
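For readers who want the “within 25% of the actual results 75% of the time” yardstick pinned down, here is a minimal Python sketch of how such a figure could be computed. The function name and the effort numbers are my own inventions for illustration; nothing here comes from McConnell’s book or from the talk, and, as McConnell himself says, this yardstick alone is not his definition of a good estimate.

```python
def fraction_within(estimates, actuals, tolerance=0.25):
    """Return the share of estimates that land within `tolerance` of the actual result."""
    if not estimates or len(estimates) != len(actuals):
        raise ValueError("need two non-empty sequences of equal length")
    # An estimate counts as a hit when its error is at most tolerance * actual.
    hits = sum(1 for e, a in zip(estimates, actuals) if abs(e - a) <= tolerance * a)
    return hits / len(estimates)


# Hypothetical effort figures in person-days, invented for illustration only.
estimates = [10, 20, 30, 40]
actuals = [12, 19, 45, 41]

share = fraction_within(estimates, actuals)
print(f"{share:.0%} of estimates were within 25% of actuals")
```

Note what the measure does and does not say: it counts how often estimates land near actuals, and it is silent about how large the misses are. The speaker’s “lose it all, plus 10x” scenario is his own addition, not something the measure implies.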

Citing of dubious “data”

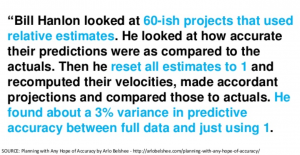

26:55 “You can abandon planning poker, story points, and roughly get the same outcome. So, a very smart guy, Bill Hanlon, he worked for Microsoft, so it’s large orgs buying into these ideas, right?”

Here’s the thing: this alleged study that the speaker cites, by Bill Hanlon at Microsoft, is unavailable. All internet references to it anywhere (and references to it are indeed quite popular among NoEstimates advocates), when you follow the links to try to chase the study down, are actually references to a single-paragraph, 86-word, third-hand description of that study in an old blog post by, yes, yet another NoEstimates advocate! That paragraph reads:

“Additionally, Bill Hanlon (who also works at Microsoft) has data that shows estimates to be pretty much useless as long as the story sizes are in the roughly-linear zone. He looked at 60-ish projects that used relative estimates. He looked at how accurate their predictions were as compared to the actuals. Then he reset all estimates to 1 and recomputed their velocities, made accordant projections and compared those to actuals. He found about a 3% variance in predictive accuracy between full data and just using 1.”

Maybe this study happened, but it’s unpublished: we can’t look at it or examine its context, rigor, or methodology. It’s no better than the proverbial “well, I know a guy.” And its conclusions are certainly impossible to evaluate fairly, based solely on a partisan agenda-laden short description of them. But to NoEstimates advocates, since this third-hand 86-word description of it agrees with their stance, it’s utter gold; the speaker here uses it as a way to make sweeping statements like “it’s large organizations buying into these ideas” and “planning poker is a waste of your time.”
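To make concrete what that paragraph appears to describe, here is a minimal Python sketch of one plausible reading of the procedure: project the remaining work from velocity measured in story points, then repeat with every story reset to a size of 1. Everything in it is an assumption on my part: the function name, the data layout, and the numbers are invented, and the sketch says nothing about what the actual study did or found.

```python
def projected_sprints(done_per_sprint, remaining):
    """Project sprints still needed: remaining work divided by average velocity so far."""
    velocity = sum(done_per_sprint) / len(done_per_sprint)
    return remaining / velocity


# Invented data: each inner list holds the story-point sizes completed in one sprint.
completed = [[3, 5, 2], [5, 3, 3], [2, 8, 1]]
# Invented backlog: story-point sizes of the stories not yet done.
remaining_stories = [3, 5, 2, 3, 5, 2, 8, 3]

# Projection using the relative estimates (story points).
points_projection = projected_sprints(
    [sum(sprint) for sprint in completed], sum(remaining_stories)
)
# Projection after resetting every estimate to 1, i.e. simply counting stories.
count_projection = projected_sprints(
    [len(sprint) for sprint in completed], len(remaining_stories)
)

print(f"story points: {points_projection:.2f} sprints")
print(f"story counts: {count_projection:.2f} sprints")
```

Even this toy version forces choices that the 86-word description never specifies: how the projections were compared with actuals, what the “3% variance” measures, and which projects qualified as being in the “roughly-linear zone.” Those are exactly the details we would need the study itself to answer.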

Bottom line

I’ve done this entire lengthy blog post without even discussing the weakness of the NoEstimates ideas themselves. As I said, those substantive points have been sufficiently covered for years now, essentially unanswered by the NoEstimates advocates (other than through blocking and insulting anyone who brings them up). The above deconstruction goes beyond those unanswered specific objections, by exposing the rhetorical and logical gaps of the movement as well: in short, a key takeaway here is that it’s not just that the NoEstimates ideas are faulty, but that they’re very poorly argued as well.

In fact, I believe that those two characteristics are linked. Poor ideas generally tend to lead to poor argumentation style, because nothing that is actually substantive (e.g., logic, facts, valid data) is available to promote the idea on its own merits. Rampant reliance on illogic, selective redefinitions, misquoting of experts, and phantom data actually exposes how unsupportable the ideas themselves are. Solid ideas wouldn’t need such trickery to convince people of their merit. Deconstructing this NoEstimates presentation, as I’ve done above, underscores how at this point, people need to look at NoEstimates advocacy chiefly as a glaring case study in poor behavior and faulty argumentation style.

Lagniappe:

Other presentations on #NoEstimates, with interesting quotes:

- Allen Holub, “#NoEstimates” https://www.youtube.com/watch?v=QVBlnCTu9Ms

  - 0:07 “My topic today is #NoEstimates. And what I mean by that is what that hashtag actually says, which is to say, we need to just stop doing all estimation now. Estimation has no value at all.”

  - 0:32 “The main problem with estimates is that estimates are always based on guesswork. You’re always guessing.”

- Gerard Beckerleg, “How to Estimate in Software Development | #NoEstimates”: https://www.youtube.com/watch?v=bicBUVbeR58

Earlier titles for this same video are quite revealing: “Do my requirements look BIG in this?” (in short, depicting business users as asking to be lied to) and “#NoEstimates – Stop lying to yourself and your customers, and stop estimating”

- Woody Zuill, “No Estimates: Let’s Explore the Possibilities” http://vimeo.com/79128724

  - 1:28 “For those of you here who are living in a non-agile world, I have no hope for you.”

  - 1:58 “The first time I talked about this, the audience … were so aggressively angry at me. And I enjoy that, by the way.”

- Johan Öbrink, “Why promising nothing delivers more and planning always fails” https://www.youtube.com/watch?v=Dh3VC-8yCgA

The title on this one pretty much speaks for itself. One pictures NoEstimates advocates earnestly explaining to business folks how planning always fails.

It’s so full of misunderstanding; e.g., 10:07 “estimates are determinism” shows how little he understands the principles of estimating.

But the bigger issue, and we see this at the conferences I speak at on Space and Defense Integrated Program Performance Management, is that the decision makers are not in the room. For our IPPM, we’re missing the Program Managers and Systems Engineers and only the Cost and Schedule geeks are there.

He points to the PMO, but no credible PMO could do their job without estimates of cost, schedule, risk, and technical performance provided every week.

12:36 shows a failure to understand the difference between Epistemic and Aleatory uncertainty. This failure can be found in other domains. Nuke Power and Manned Spaceflight know better, but it took them a while. He has no foundation by which to discover how other domains have solved the issues (and there are many) with estimates. This is a simple failing of many in the NE world: they simply don’t know how to research a solution to a problem, and assume the first thing that comes into their head is the answer.

“It’s really notable: the speaker divides the audience right at the start, expressing an outright “them against us” stance.”

Argument by selective observation: IMMEDIATELY after the quote you selected, the speaker says, “We’re going to get along by the end. … I think at the end, we’re going to find a lot more congruence than we find disagreement.”

Perhaps you are correct, in that the #NoEstimates movement has poorly presented some of its arguments. However, it’s more amusing and telling that your very first retort to a quote from the talk is disingenuous at best. “Blatant audience manipulation,” indeed. Impossible to get past this hypocritical bit.

Thanks for commenting, “Joe”.

Let’s see: I didn’t somehow randomly choose to make my “very first retort” (as you put it) a discussion of the speaker’s “them vs us” attitude; I started with that because that’s literally where the speaker started, four seconds into the video. As I quoted, verbatim, he actually had an audience “show of hands”, expressly dividing them into who was likely to be on his side and who not. (Verbatim: “Where are my developers? That’s devs; all right, there’s my friends.”) Even though he invoked some lip service about “at the end we’re going to find a lot of congruence”, that doesn’t take away his opening salvo of blatant, undeniable “them vs. us” comments, including such references as “I like to know where the punch might come from,” etc.

In other words, “them vs us” is how the speaker himself decided to tee up his talk, and that undeniable fact is worthy of comment. Moreover, throughout the talk, he continued to refer back to these two camps. At 9:46, when someone in the audience gave him one definition of estimate that he felt was negative towards developers, he said, “Whoa, where are my devs?” At 13:25, in response to another audience member telling him something he didn’t like, he replied, “you’re in a PMO.”

So, I’m not sure how it makes any sense at all for you to accuse me of “audience manipulation”, let alone hypocrisy, when I point out the speaker’s own words at the start of his talk, and quote verbatim his multiple, direct, intentional statements designed to divide up his audience explicitly into friends and foes.

Agreed, Glen. As I basically said, the arguments themselves for #NoEstimates were countered effectively long ago. I could have spent the entire blog post talking about specific objections like that, but it just retreads old ground. I chose instead to zero in on the deception, illogic, manipulation, etc., which is kind of a meta layer above the actual issues.

A comment to Joe.

The statement “We’re going to get along by the end. … I think at the end, we’re going to find a lot more congruence than we find disagreement” shouts us-vs-them as well. It very clearly states that there exists a “we and you” here, just that hopefully we’ll get along anyway.

Glasses are both half-empty and full at the same time. I can’t change your perception of it. Not being strongly convinced by either extreme (noestimates vs heavyweight estimation processes), I see it as some of both.

Perhaps we should hearken back to the fact that the request for estimates, however necessary and well-intended, has largely fallen victim to truly damaging, abusive practice on the part of those asking for them, and as such has helped create this “us vs them attitude.” What could you (or we) do to help shrink that?

Putting some other shoes on:

- While you may view the noestimates folks as trying to create discord, it’s not hard to put yourself in their shoes and see that they might honestly be trying to find a better approach to collaborating (despite their poor logic and use of rhetoric). Viewing every statement as something to attack, or even a lie, doesn’t help your concern about “we & you.”

- While they might view the heavyweight estimates folks as old-school and rigid, it’s probably not hard for them to understand why the business needs some information about what might be done.

Not sure what your point here is, “Joe”; it seems to be fairly unrelated to the point of this blog post, which was to point out, in detail, the misleading tactics (skewed definitions, misquoting of experts, use of phantom data) that are used by NE advocates to promote their cause, as reflected in one very recent conference presentation by an advocate. You appear to have nothing to say about those tactics, besides objecting to my characterizing the conference speaker as openly promoting a “them vs us” attitude, which he undeniably does (see my previous response).

My point was NOT that the NE folks are “trying to create discord”, but that they argue their case poorly by using these indefensible tactics. If they’re trying to find a “better approach to collaborating”, they should probably first work at NOT blocking their critics, NOT labeling us at every turn as “trolls, liars, morons, interlopers, box of rocks” etc, and instead actually engaging with some professionalism on the substantive issues. But they don’t.

If you have substantive and specific defenses of NE positions (something more substantive and specific than just claiming that critics “view every statement as something to attack”), by all means jump into the discussion and make those points, preferably without clinging to anonymity, and preferably on a different post (there are many to choose from) where the pros and cons of NE positions are the direct topic, unlike this one, which is focused on exposing the poor NE tactics overall.